How to Reach Us

Journalists may reach the media relations team by phone at 206-543-3620 from 8 a.m. to 5 p.m. PT, or by email at mediarelations@uw.edu. For urgent media requests after business hours, call our on-call representative at 206-669-0164.

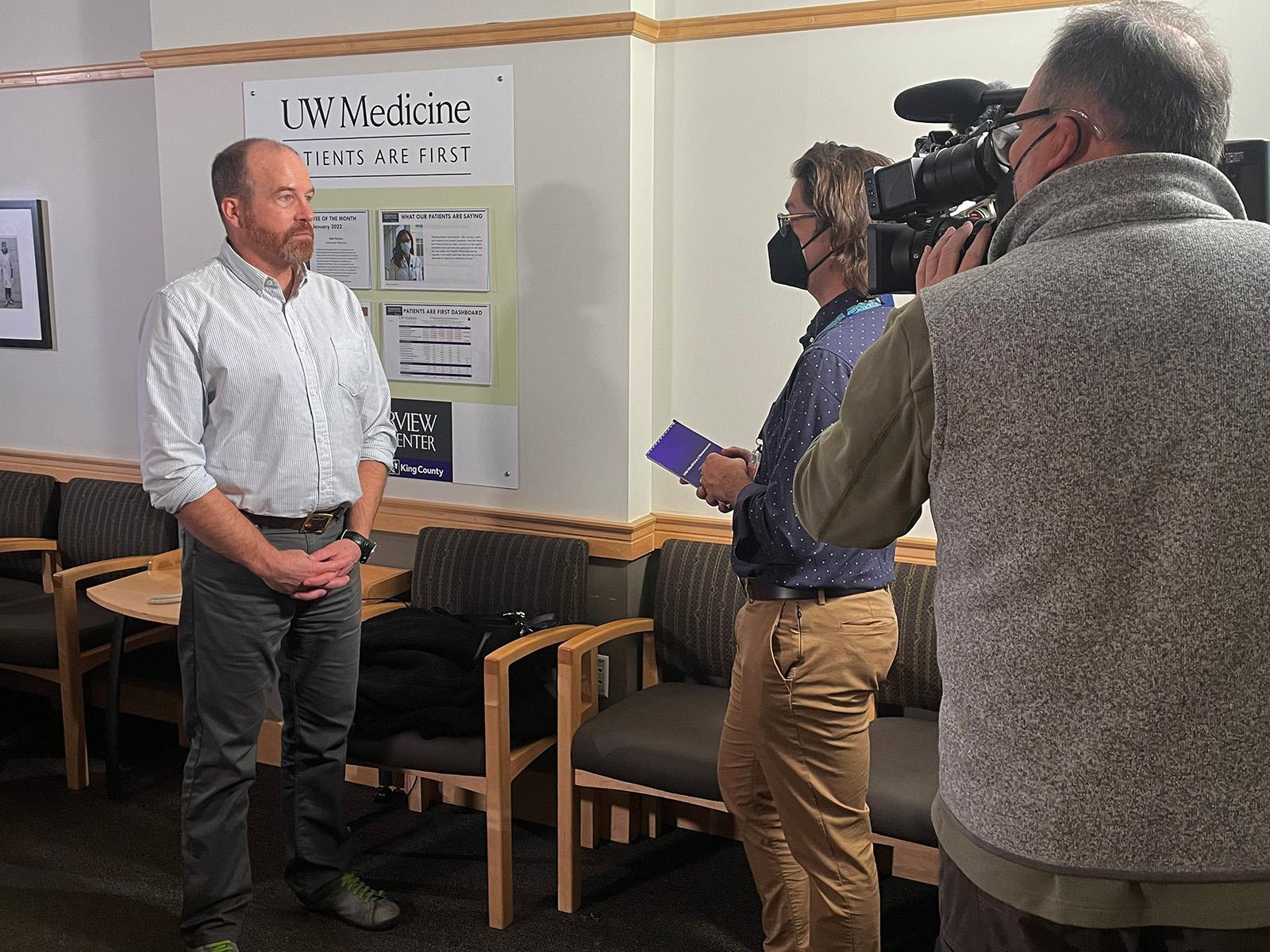

If you need us to record an on-camera interview with a UW Medicine expert, please email your request with specific details.

Contact Us

Reporters: For interviews with clinicians, researchers and instructors, reach our team at mediarelations@uw.edu.